White Label Semantics

The framework relies heavily on Integral theory, which probably came out of Gnostic traditions and was popularised by Ken Wilber. If you know these spaces you probably already understand the system; if not, let’s get familiar.

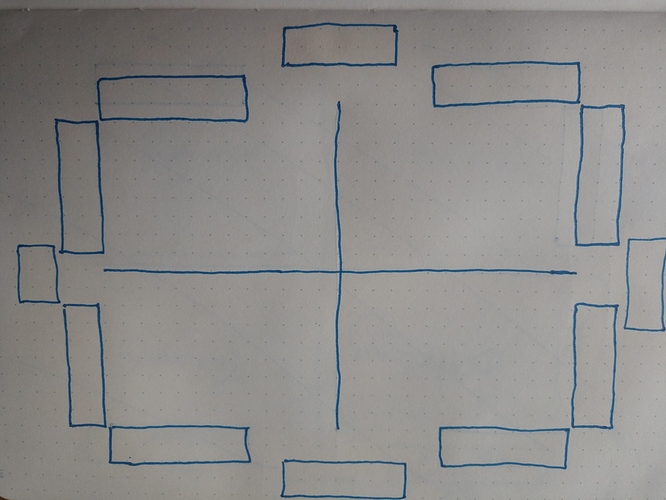

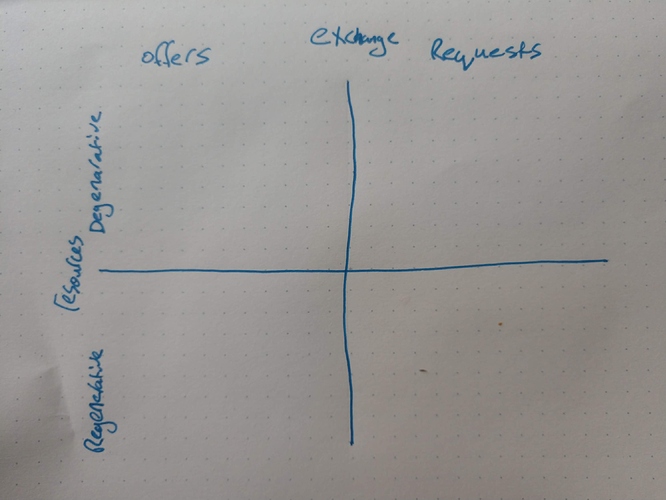

Start with this blank visualisation:

We have labels along each axis at the top, bottom, left and right.

The “name space” labels at the top and bottom are for the same word (reduce duplicated info if you like).

The “name space” labels at the left and right are for the same word (reduce duplicated info if you like).

These words are then split into opposing derivatives along the top and sides.

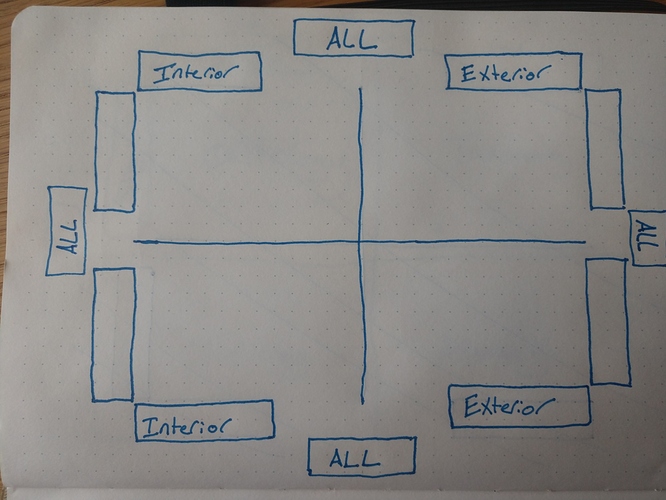
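If you want to hold this blank layout in code, here is a minimal Python sketch. `Axis`, `Frame` and `edge_labels` are hypothetical names, not part of the framework, and storing each word once is just one way of acting on the “reduce duplicated info” note. The placeholder words are also hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """One name space: a single word split into two opposing derivatives."""
    word: str    # the name-space word, drawn on both ends of the axis
    pole_a: str  # first derivative
    pole_b: str  # opposing derivative


@dataclass(frozen=True)
class Frame:
    """The blank visualisation: one axis on the top/bottom, one on the sides."""
    horizontal: Axis  # word on the top and bottom edges, derivatives along the top
    vertical: Axis    # word on the left and right edges, derivatives down the sides

    def edge_labels(self) -> dict[str, str]:
        # Each word is stored once and only repeated when the edges are drawn,
        # so the duplicated labels on opposite edges cannot drift apart.
        return {
            "top": self.horizontal.word,
            "bottom": self.horizontal.word,
            "left": self.vertical.word,
            "right": self.vertical.word,
        }


# "Word A", "A1" etc. are hypothetical placeholders, not terms from the framework.
frame = Frame(Axis("Word A", "A1", "A2"), Axis("Word B", "B1", "B2"))
print(frame.edge_labels())
```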

In the following example, let’s semantically define a subjective interpretation of everything by choosing the word “All” for each axis, just with different “framings”. We then split the first “All” into opposing words; this example uses “Interior/Exterior” along the top/bottom rows:

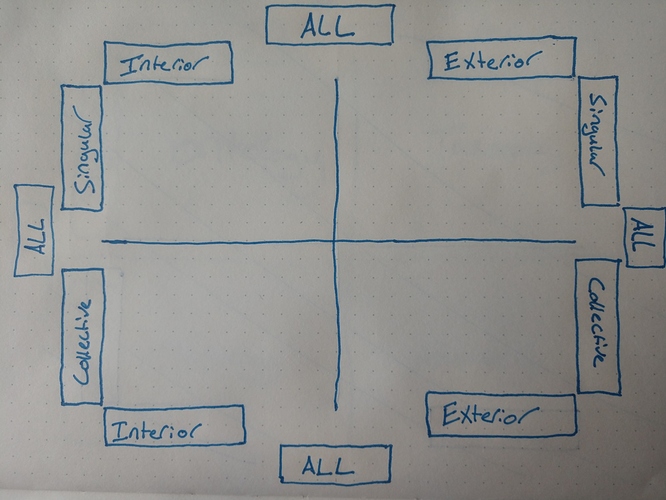

Next, we split the second “All” label from another frame; this example uses “Individual/Group” down the sides:

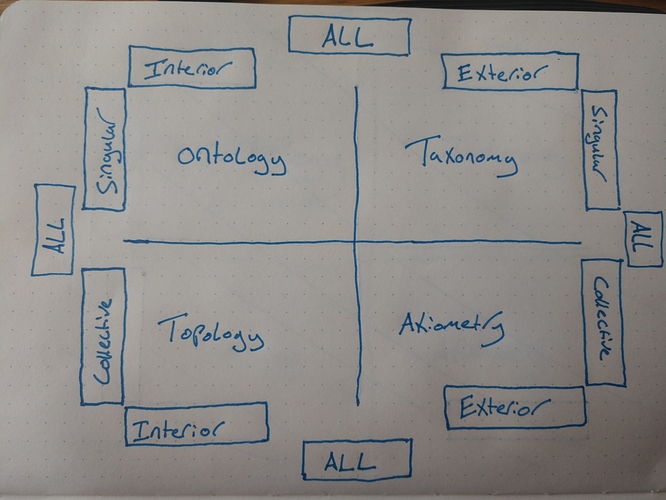

We now have two differently framed definitions of “All”. The panes inside the window are still blank, so using our word matrix we can now fill them in with integrals, each pane combining the derivatives that meet at it:

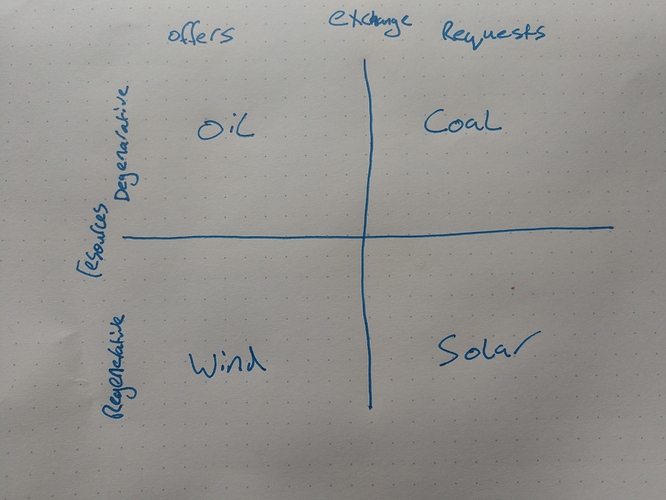
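Filling the panes is essentially a Cartesian product of the two dualisms: two derivatives across the top times two down the sides gives four panes. A minimal Python sketch, assuming a hypothetical `Axis` type and `fill_panes` helper (neither comes from the original doc); the “Column-Row” pane label format is also just an illustrative assumption:

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Axis:
    """One name space: a single word split into two opposing derivatives."""
    word: str
    pole_a: str
    pole_b: str


def fill_panes(columns: Axis, rows: Axis) -> dict[tuple[str, str], str]:
    """Pair every derivative on the top axis with every derivative on the side axis.

    Keys are (column derivative, row derivative); values are pane labels in a
    hypothetical "Column-Row" format.
    """
    col_poles = (columns.pole_a, columns.pole_b)
    row_poles = (rows.pole_a, rows.pole_b)
    return {(col, row): f"{col}-{row}" for row, col in product(row_poles, col_poles)}


top = Axis("All", "Interior", "Exterior")   # first framing of "All"
side = Axis("All", "Individual", "Group")   # second framing of "All"

for (col, row), label in fill_panes(top, side).items():
    print(f"{row} x {col}: {label}")
```

Swapping in different source words only changes the two `Axis` values; the product step stays the same, which is what makes the compositions below interchangeable.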

If we choose other words as our source, we get different compositions. For example, we could frame “resources” as degenerative or regenerative, and “exchange” as offers and requests, which would look like this:

We can now place objects in the windows to semantically define them. Here’s one breakdown for resource exchange:

If two users choose the same name spaces, composed from the same dualisms, we have a semantic definition both can agree on. A degree of correlation can also be inferred between name spaces composed of alternative derivatives (e.g. replace “exchange” with “communications”, then “offers/requests” with “proposals/agreements”, and you have a correlated space).
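The agreement and correlation ideas can be sketched in Python too. Everything here is an assumption layered on the prose: `Axis`, `agree` and `correlation` are hypothetical names, agreement ignores the order of the two derivatives, and the correlation “degree” is an invented score (one point per matching word and per shared derivative after substituting known alternatives, out of three per axis), not a measure the framework defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """One name space: a single word split into two opposing derivatives."""
    word: str
    pole_a: str
    pole_b: str


Window = tuple[Axis, Axis]  # (top/bottom axis, left/right axis)


def agree(a: Window, b: Window) -> bool:
    """True when both windows use the same name spaces built from the same dualisms."""
    def key(axis: Axis) -> tuple[str, frozenset[str]]:
        # Assumption: the order of the two derivatives does not matter.
        return (axis.word, frozenset((axis.pole_a, axis.pole_b)))
    return [key(x) for x in a] == [key(x) for x in b]


def correlation(a: Window, b: Window, alternatives: dict[str, str]) -> float:
    """Hypothetical degree of correlation in [0, 1].

    Terms in `a` are first swapped for their alternatives (e.g. "Exchange" ->
    "Communications"); each axis then scores 1 for a matching word plus 1 per
    shared derivative, out of 3 per axis.
    """
    score = 0
    for axis_a, axis_b in zip(a, b):
        word = alternatives.get(axis_a.word, axis_a.word)
        poles = {alternatives.get(p, p) for p in (axis_a.pole_a, axis_a.pole_b)}
        score += int(word == axis_b.word)
        score += len(poles & {axis_b.pole_a, axis_b.pole_b})
    return score / (3 * len(b))


resources = Axis("Resources", "Degenerative", "Regenerative")
mine = (resources, Axis("Exchange", "Offers", "Requests"))
yours = (resources, Axis("Exchange", "Requests", "Offers"))
theirs = (resources, Axis("Communications", "Proposals", "Agreements"))
alternatives = {"Exchange": "Communications", "Offers": "Proposals", "Requests": "Agreements"}

print(agree(mine, yours))                       # True
print(agree(mine, theirs))                      # False
print(correlation(mine, theirs, alternatives))  # 1.0
print(correlation(mine, theirs, {}))            # 0.5
```

Without the alternatives map only the shared “resources” axis matches, so the score drops to 0.5; supplying the exchange/communications correspondences lifts it to a full correlation.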

Original hack.md doc for reference. Thoughts by myself and @instagaian.